A common requirement in planning is to be able to produce an audit report, you would think this would be easy as the options are available in the user interface to define which actions to audit.

The problem is that for on-premise planning, once the options have been enabled there is currently no ability through the user interface to produce a report.

The documentation states:

“View results in the HSP_AUDIT_RECORDS table using a RDBMS report writer.”

So not very helpful and a planning administrator may not have access to the planning applications database table “HSP_AUDIT_RECORDS”, this can mean involving a DBA to gain access to the table or have an extract generated, this is not always an easy task.

It is only recently that the ability to view and download audit data was made available in EPM Cloud, so don’t expect this for on-premise any time soon.

Let us first take a quick look at what is available in the cloud, like with on-premise, auditing can be enabled by selecting one or more of the audit types. Once enabled audit data will be displayed in the UI.

There is the ability to filter the different audit types.

The date range can be filtered from a selection of predefined ranges.

The data can then either be viewed or exported and opened in Excel.

Until this functionality is available for on-premise we must look at alternative solutions. As the audit data is stored in one database table I thought maybe using Groovy could come to the rescue again. In a previous post I covered how easy using SQL with Groovy can be, which you can read about here.

I am going to demo the solution first and then go into more detail on how it was put together, it is based on 11.1.2.4 and to be able to use Groovy functionality you need to be on at least Calculation Manager patch 11.1.2.4.006 but I recommend .008+

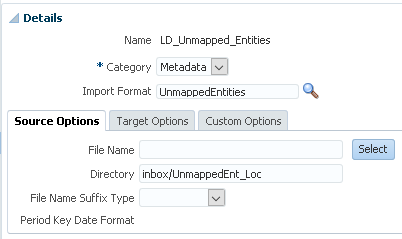

I will be using the simplified interface as that is the closest to cloud, even though still a long way off. It also provides an inbox/outbox explorer which is important for this solution.

I have a business rule which will call a Groovy script to handle the audit data, three runtime prompts are displayed after launching the rule.

The dropdown for audit data type allows the selection of all audit data, all audit data excluding data, or data only.

The final solution allows the selection of all the different audit types similar to the cloud, but for simplicity the example I am going to go through will be based on the above, if you are interested in the final solution then please feel free to get in touch.

Just like with the cloud, a date range can be selected for the audit data.

There is also the option to set a delimiter for the audit data, after making the selections the rule is ready to be launched.

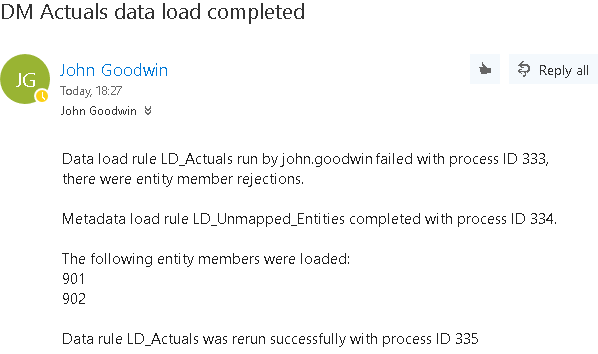

If audit data records are returned for the selected values the rule should run successfully.

After running the rule, you can go to the console.

Then under “Actions” the “Inbox/Outbox Explorer” can be accessed.

There will be will be a timestamped archive file available.

This can then either be downloaded or deleted.

Once downloaded, the zip file can be opened which will contain a text file containing the audit data.

The audit text file can be extracted and viewed in a text editor, the data is ordered by date. I could have changed the column headings but for this example I have stuck with the names of the columns in the database audit table.

Alternatively, it can be opened and viewed in Excel.

The functionality may not be exactly the same as the cloud but in terms of the ability to filter the audit records and then download, it comes pretty close.

The solution includes the ability to archive the audit table which basically means the records in the audit database table are transferred to another table which holds the history, then the audit table is cleared down.

The rule has a runtime prompt included to stop it from being accidentally run.

Finally, there is a form which runs a Groovy based rule on load to display the number of records in the audit table, the form displays the last time the audit table was archived. The rules to either archive the data or produce the audit file are also attached to the form.

So how was this all put together? Well you are going to have to wait until the next part where I will cover it in more detail.

The problem is that for on-premise planning, once the options have been enabled there is currently no ability through the user interface to produce a report.

The documentation states:

“View results in the HSP_AUDIT_RECORDS table using a RDBMS report writer.”

So not very helpful and a planning administrator may not have access to the planning applications database table “HSP_AUDIT_RECORDS”, this can mean involving a DBA to gain access to the table or have an extract generated, this is not always an easy task.

It is only recently that the ability to view and download audit data was made available in EPM Cloud, so don’t expect this for on-premise any time soon.

Let us first take a quick look at what is available in the cloud, like with on-premise, auditing can be enabled by selecting one or more of the audit types. Once enabled audit data will be displayed in the UI.

There is the ability to filter the different audit types.

The date range can be filtered from a selection of predefined ranges.

The data can then either be viewed or exported and opened in Excel.

Until this functionality is available for on-premise we must look at alternative solutions. As the audit data is stored in one database table I thought maybe using Groovy could come to the rescue again. In a previous post I covered how easy using SQL with Groovy can be, which you can read about here.

I am going to demo the solution first and then go into more detail on how it was put together, it is based on 11.1.2.4 and to be able to use Groovy functionality you need to be on at least Calculation Manager patch 11.1.2.4.006 but I recommend .008+

I will be using the simplified interface as that is the closest to cloud, even though still a long way off. It also provides an inbox/outbox explorer which is important for this solution.

I have a business rule which will call a Groovy script to handle the audit data, three runtime prompts are displayed after launching the rule.

The dropdown for audit data type allows the selection of all audit data, all audit data excluding data, or data only.

The final solution allows the selection of all the different audit types similar to the cloud, but for simplicity the example I am going to go through will be based on the above, if you are interested in the final solution then please feel free to get in touch.

Just like with the cloud, a date range can be selected for the audit data.

It doesn’t have to be limited to a predefined range, it could just as easily have the option to select a start and end date.

There is also the option to set a delimiter for the audit data, after making the selections the rule is ready to be launched.

If audit data records are returned for the selected values the rule should run successfully.

After running the rule, you can go to the console.

Then under “Actions” the “Inbox/Outbox Explorer” can be accessed.

There will be will be a timestamped archive file available.

This can then either be downloaded or deleted.

Once downloaded, the zip file can be opened which will contain a text file containing the audit data.

The audit text file can be extracted and viewed in a text editor, the data is ordered by date. I could have changed the column headings but for this example I have stuck with the names of the columns in the database audit table.

Alternatively, it can be opened and viewed in Excel.

The functionality may not be exactly the same as the cloud but in terms of the ability to filter the audit records and then download, it comes pretty close.

The solution includes the ability to archive the audit table which basically means the records in the audit database table are transferred to another table which holds the history, then the audit table is cleared down.

The rule has a runtime prompt included to stop it from being accidentally run.

Finally, there is a form which runs a Groovy based rule on load to display the number of records in the audit table, the form displays the last time the audit table was archived. The rules to either archive the data or produce the audit file are also attached to the form.

So how was this all put together? Well you are going to have to wait until the next part where I will cover it in more detail.